Why AI code generation falls short for enterprise workflows

AI code generation accelerates parts of development, but enterprise applications need more than faster code. Workflow orchestration, governance, multi-environment deployment, and visual transparency are platform problems that code generators don’t address.

GitHub Copilot can scaffold a REST API in seconds. Claude can refactor a function and write test cases that would take a developer an hour to produce manually. Google’s CEO Sundar Pichai reported during the company’s Q3 2024 earnings call that over 25% of Google’s new code is now AI-generated. AI code generation has earned its place in the development toolkit, and the productivity gains for certain tasks are real.

But generating code and shipping enterprise applications are two very different things. An AI tool can write a data validation function. It cannot orchestrate a procurement workflow that spans your ERP, your CRM, and a custom approval system, with branching logic based on request amount, department, and vendor status. It cannot deploy that workflow across development, staging, and production environments. And it cannot give your compliance team an auditable trail of every business rule, data flow, and permission boundary in the system.

Enterprise teams face a familiar tension: pressure to deliver custom applications faster, and a growing set of tools that promise to help. AI code generation addresses part of the problem. But for business-critical applications, the gap between “generated code” and “production-ready enterprise workflow” remains wide.

Where AI code generation delivers

Let’s be clear about what these tools do well.

In a controlled experiment, GitHub found that developers using Copilot completed the assigned task 55% faster than those without it. For boilerplate generation, test scaffolding, refactoring, and learning unfamiliar frameworks, AI assistants reduce friction. They help developers stay in flow and spend less time on repetitive patterns.

These are genuine, measurable gains. Any honest assessment of AI code generation has to start here, because the tools work well for the tasks they were designed for: helping individual developers write and iterate on code faster.

For enterprise teams, the harder question is whether faster code is sufficient for the applications your organization actually needs to build, deploy, and maintain.

The enterprise reality

Enterprise applications don’t live in isolation. They connect to existing systems, enforce business rules across departments, serve hundreds or thousands of users with different permission levels, and operate under regulatory requirements that demand auditability. AI code generation, as it exists today, addresses almost none of these concerns.

Large codebases break the model

A July 2025 study from MIT’s Computer Science and Artificial Intelligence Laboratory, titled “Challenges and Paths Towards AI for Software Engineering,” mapped the gap between what AI can do with code and what real software engineering demands.

The researchers found that current AI models struggle with large, interconnected codebases. Foundation models are trained on public repositories, but as MIT graduate student Alex Gu noted, “every company’s code base is kind of different and unique,” which puts proprietary conventions and architecture fundamentally outside the models’ training distribution. The result is code that looks plausible but calls non-existent functions, violates internal style rules, or fails CI pipelines.

MIT professor Armando Solar-Lezama put it bluntly: popular narratives reduce software engineering to “the undergrad programming part: someone hands you a spec for a little function and you implement it.” Real enterprise software engineering is broader. It includes refactoring, migration, testing, documentation, code review, and maintenance. These are tasks where AI tools have far less to offer.

Speed without structure creates debt

Generating code faster sounds like pure upside. The data tells a more complicated story.

GitClear’s 2025 report analyzed 211 million lines of code across five years (2020–2024) from repositories owned by companies including Google, Microsoft, and Meta. They found that as AI assistant adoption grew, code quality metrics shifted in concerning ways. The share of copy-pasted code surged from 8.3% in 2020 to 12.3% in 2024, while refactored (or “moved”) lines dropped from 24.1% to just 9.5% over the same period. The proportion of new code revised within two weeks of its initial commit nearly doubled, from 3.1% to 5.7%.
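To make the relative size of these shifts concrete, the short calculation below recomputes them from the six percentages quoted above. It uses no other data, and the ratios are simple arithmetic on the reported figures, not additional findings from the report.

```python
# Shares of changed lines quoted above from GitClear's 2025 report (percent).
GITCLEAR_SHARES = {
    "copy-pasted lines": (8.3, 12.3),
    "moved (refactored) lines": (24.1, 9.5),
    "revised within two weeks": (3.1, 5.7),
}


def relative_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, as a fraction (0.5 == +50%)."""
    return (after - before) / before


for metric, (share_2020, share_2024) in GITCLEAR_SHARES.items():
    change = relative_change(share_2020, share_2024)
    ratio = share_2024 / share_2020
    print(f"{metric}: {share_2020}% -> {share_2024}% ({change:+.0%}, {ratio:.2f}x)")
```

Run as-is, it reports roughly +48%, -61%, and +84% for the three metrics. The 1.84x ratio for two-week revisions is what "nearly doubled" refers to; these are relative changes, distinct from percentage-point differences (3.1% to 5.7% is a 2.6-point rise).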

In other words, teams are writing more code but maintaining it less carefully. That’s technical debt accumulating at an accelerated rate.

Google’s 2024 DORA (DevOps Research and Assessment) report pointed the same way. Its analysis estimated that every 25% increase in AI adoption was associated with a 7.2% decrease in delivery stability. AI boosted individual productivity and job satisfaction, but team-level delivery outcomes told a different story.

AI-generated code introduces more defects

A December 2025 report from CodeRabbit, analyzing 470 open-source GitHub pull requests, found that AI-authored PRs contained approximately 1.7 times more issues than human-written ones. Logic and correctness errors were 75% more common. Security issues were up to 2.74 times more common. Error handling gaps, the kind that cause real-world outages, appeared nearly twice as often.

These aren’t exotic edge cases. They’re the everyday quality problems that enterprise teams already spend significant effort preventing: missing null checks, incorrect dependency ordering, insecure credential handling, and flawed control flow.
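As a hypothetical illustration (not taken from the CodeRabbit report), here is the kind of missing null check and error-handling gap described above, next to a reviewed version. The `VENDORS` table and both function names are invented for this sketch.

```python
from typing import Optional

# Hypothetical in-memory stand-in for a vendor master-data system.
VENDORS = {
    "acme": {"tier": "preferred", "credit_limit": 50_000},
    "globex": {"tier": "new"},  # record exists but has no credit limit yet
}


def credit_limit_unreviewed(vendor_id: str) -> int:
    # Plausible-looking, but raises KeyError for an unknown vendor or for a
    # record without "credit_limit", and nothing here handles that case.
    return VENDORS[vendor_id]["credit_limit"]


def credit_limit_reviewed(vendor_id: str) -> Optional[int]:
    """Return the vendor's credit limit, or None when the vendor or field is missing."""
    record = VENDORS.get(vendor_id)
    if record is None:
        return None  # unknown vendor: let the caller decide how to proceed
    limit = record.get("credit_limit")
    if not isinstance(limit, int):
        return None  # missing or malformed limit
    return limit
```

Both versions return 50,000 for `"acme"`, but only the reviewed one survives `"globex"`: it returns `None` where the unreviewed version raises `KeyError`. Bugs like this pass a quick read precisely because the happy path works.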

Experienced developers don’t always benefit

Perhaps the most counterintuitive finding comes from METR’s randomized controlled trial, published in July 2025. The study measured how AI tools affected experienced open-source developers working on their own repositories, codebases they knew intimately, with an average of five years of prior experience per project.

When allowed to use AI tools, the developers took 19% longer to complete their tasks than when working without AI assistance.

The researchers offered several explanations: time spent reviewing and correcting AI output, context-switching between AI suggestions and existing code patterns, and the overhead of guiding the AI toward project-specific conventions. For developers who already know their codebase well, the assistance can become a distraction.

What enterprise applications actually require

The pattern across all of this research points in one direction: code generation solves one part of the problem. Enterprise applications require much more.

Visual transparency for non-technical stakeholders

Business stakeholders, product owners, and compliance teams need to understand how an application works. They need to verify that business rules are implemented correctly, that data flows match regulatory requirements, and that permissions align with organizational policy.

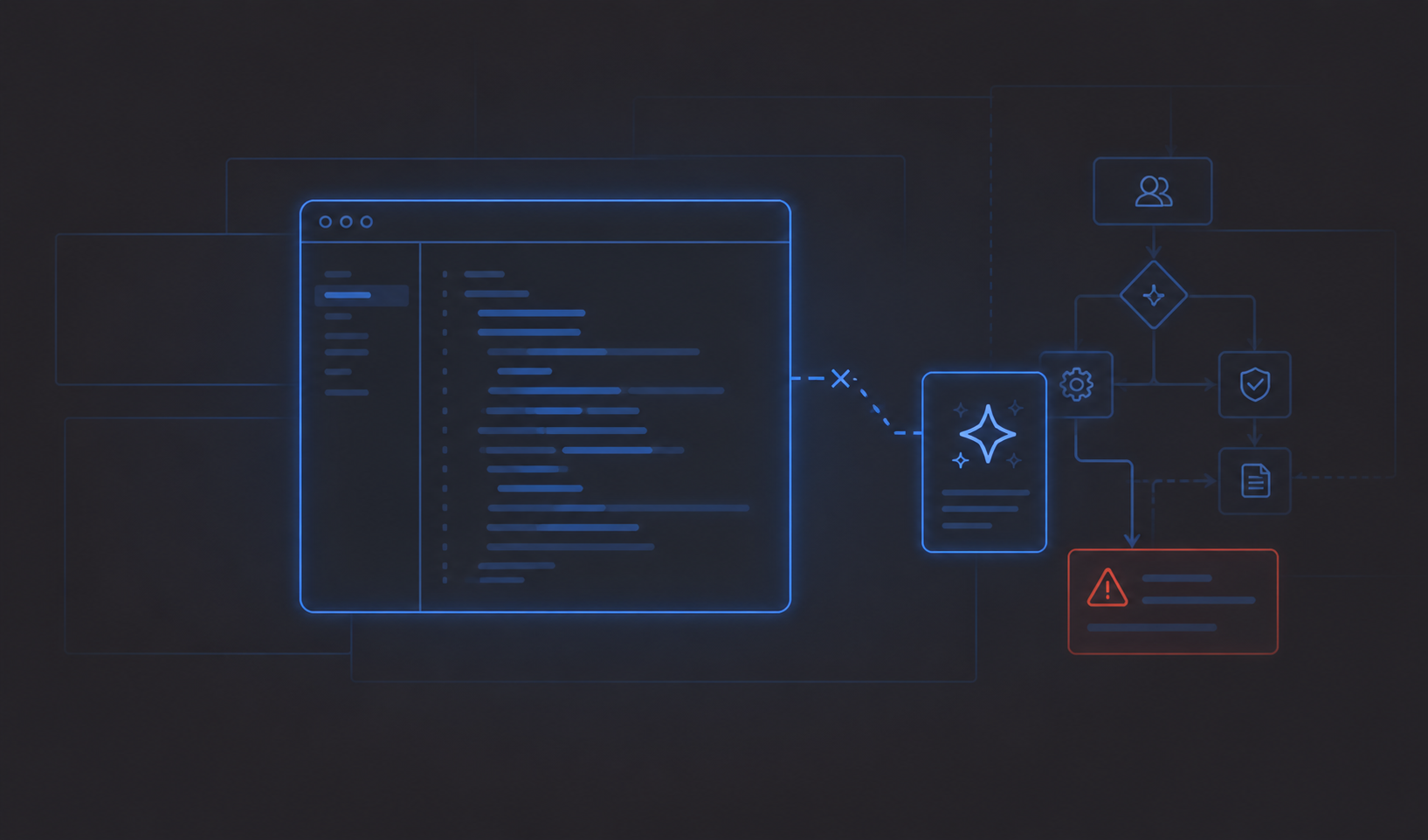

AI-generated code is opaque to anyone who doesn’t read code. As with hand-written code, business logic ends up buried in files that only developers can parse. When something goes wrong, or when an auditor asks how a specific rule is enforced, the answer requires an archaeological dig through the codebase.

Visual development platforms address this directly. When application logic, data models, and integrations are represented as visible, editable components on a canvas, anyone involved in the project can review and validate what’s been built. That’s not a nice-to-have for enterprise teams. It’s a requirement.

Workflow orchestration, not just functions

Enterprise applications are defined by their workflows: multi-step approval chains, conditional routing based on user roles and data, integration with external systems, automated notifications, error handling, and exception management.

AI code generators produce individual functions and code blocks. They don’t orchestrate end-to-end business processes. Building a procurement approval workflow that routes differently based on purchase amount, vendor tier, and department budget requires understanding how multiple systems interact, what data transformations happen between them, and what happens when any step fails. That’s workflow orchestration, and it lives above the level of code.
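To show where the branching logic fits, here is a minimal sketch of just the routing rules for such a procurement request. The tiers, thresholds, step names, and the `approval_route`/`run_workflow` functions are hypothetical, chosen only to illustrate routing on amount, vendor tier, and department budget.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class PurchaseRequest:
    amount: float            # requested amount
    vendor_tier: str         # hypothetical tiers: "preferred", "approved", "new"
    department: str
    remaining_budget: float  # department budget left in the current period


def approval_route(req: PurchaseRequest) -> List[str]:
    """Return the ordered approval steps for a request (illustrative thresholds)."""
    steps = ["manager"]
    if req.amount > req.remaining_budget:
        steps.append("finance_exception")  # over budget: finance must sign off
    if req.vendor_tier == "new":
        steps.append("vendor_onboarding")  # unvetted vendor: extra check
    if req.amount >= 10_000:
        steps.append("department_head")
    if req.amount >= 100_000:
        steps.append("cfo")
    return steps


Approver = Callable[[PurchaseRequest], bool]


def run_workflow(
    req: PurchaseRequest, approvers: Dict[str, Approver]
) -> Tuple[str, List[str]]:
    """Run each step in order; stop on the first rejection or failure."""
    completed: List[str] = []
    for step in approval_route(req):
        try:
            approved = approvers[step](req)
        except Exception as exc:  # e.g. a missing approver or a failed integration call
            return f"failed at {step}: {exc!r}", completed
        if not approved:
            return f"rejected at {step}", completed
        completed.append(step)
    return "approved", completed
```

A 25,000 request to a new vendor from a department with 20,000 left routes through `manager`, `finance_exception`, `vendor_onboarding`, and `department_head`. Even so, the sketch omits what the paragraph identifies as the hard part: calling the ERP and CRM, persisting state across steps that may take days, notifying approvers, and retrying or compensating after a failure.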

Governance and compliance by design

Enterprise compliance standards and regulations (ISO 27001, SOC 2, GDPR) require documentation, audit trails, and systematic access controls. These can’t be bolted on after the fact.

AI-generated code creates a specific governance challenge: tracking which code was AI-generated versus manually written, reviewing that code for security vulnerabilities, and ensuring it meets organizational standards. The Cloud Security Alliance noted that AI coding assistants don’t inherently understand your application’s risk model, internal standards, or threat landscape. That understanding has to come from the platform, the process, or the people reviewing the output.

Platforms built for enterprise use can address this structurally: role-based access control, conditional permissions, audit logging, and deployment workflows that enforce review gates before anything reaches production.
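As a rough sketch of what "conditional permissions with audit logging" means in practice, the example below grants an action only when one of the user's roles allows it under a condition on the record, and logs every decision. The role names, `POLICY`, `authorize`, and the log fields are hypothetical and do not describe any particular platform's implementation.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Callable, Dict, List, Set

Resource = Dict[str, Any]


@dataclass
class User:
    name: str
    roles: Set[str]
    department: str


Condition = Callable[[User, Resource], bool]

# Hypothetical policy: role -> action -> condition that must hold.
POLICY: Dict[str, Dict[str, Condition]] = {
    "requester": {"view": lambda u, r: r["owner"] == u.name},
    "approver": {
        "view": lambda u, r: r["department"] == u.department,
        "approve": lambda u, r: r["department"] == u.department and r["amount"] < 50_000,
    },
    "finance": {"view": lambda u, r: True, "approve": lambda u, r: True},
}

# In a real system this would be append-only storage, not an in-memory list.
AUDIT_LOG: List[Dict[str, Any]] = []


def deny(user: User, resource: Resource) -> bool:
    """Default condition for actions a role does not mention."""
    return False


def authorize(user: User, action: str, resource: Resource) -> bool:
    """Allow if any of the user's roles grants `action`; log every decision."""
    allowed = any(
        POLICY.get(role, {}).get(action, deny)(user, resource) for role in user.roles
    )
    AUDIT_LOG.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "user": user.name,
        "action": action,
        "resource": resource["id"],
        "allowed": allowed,
    })
    return allowed
```

Keeping the rules as data rather than scattered `if` statements is what lets them be reviewed, and the log records denials as well as grants, which is typically what an auditor asks to see.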

Multi-environment deployment

A working prototype on a developer’s machine is not a production application. Enterprise software moves through defined environments (development, testing, staging, production) with version control, rollback capability, and deployment approvals at each stage.
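To make the promotion-and-rollback model concrete, here is a minimal in-memory sketch. The `Pipeline` class, its methods, and the rule that each version needs an explicit approval per target environment are hypothetical; real deployments also involve builds, data migrations, and infrastructure that this ignores.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set, Tuple

ENVIRONMENTS = ["development", "testing", "staging", "production"]


@dataclass
class Pipeline:
    """In-memory model of versions moving through environments (hypothetical)."""
    # Deployed versions per environment; the last entry is the live one.
    history: Dict[str, List[str]] = field(
        default_factory=lambda: {env: [] for env in ENVIRONMENTS}
    )
    # Recorded approvals as (version, target environment) pairs.
    approvals: Set[Tuple[str, str]] = field(default_factory=set)

    def live(self, env: str) -> Optional[str]:
        return self.history[env][-1] if self.history[env] else None

    def deploy_to_development(self, version: str) -> None:
        self.history["development"].append(version)

    def approve(self, version: str, target: str) -> None:
        self.approvals.add((version, target))

    def promote(self, source: str, target: str) -> str:
        """Copy the live version of `source` to the next environment, if approved."""
        if ENVIRONMENTS.index(target) != ENVIRONMENTS.index(source) + 1:
            raise ValueError(f"cannot skip from {source} to {target}")
        version = self.live(source)
        if version is None:
            raise ValueError(f"nothing deployed in {source}")
        if (version, target) not in self.approvals:
            raise PermissionError(f"{version} is not approved for {target}")
        self.history[target].append(version)
        return version

    def rollback(self, env: str) -> str:
        """Restore the previously deployed version in `env`."""
        if len(self.history[env]) < 2:
            raise ValueError(f"no earlier version in {env}")
        self.history[env].pop()
        return self.history[env][-1]
```

The design choice the sketch highlights is that skipping an environment or promoting without an approval fails loudly, and rollback is a lookup of the previous version rather than a rebuild.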

AI code generators don’t touch any of this. They produce code. Getting that code into production safely, and being able to roll it back when something breaks, requires infrastructure and process that sits entirely outside the scope of what code generation tools provide.

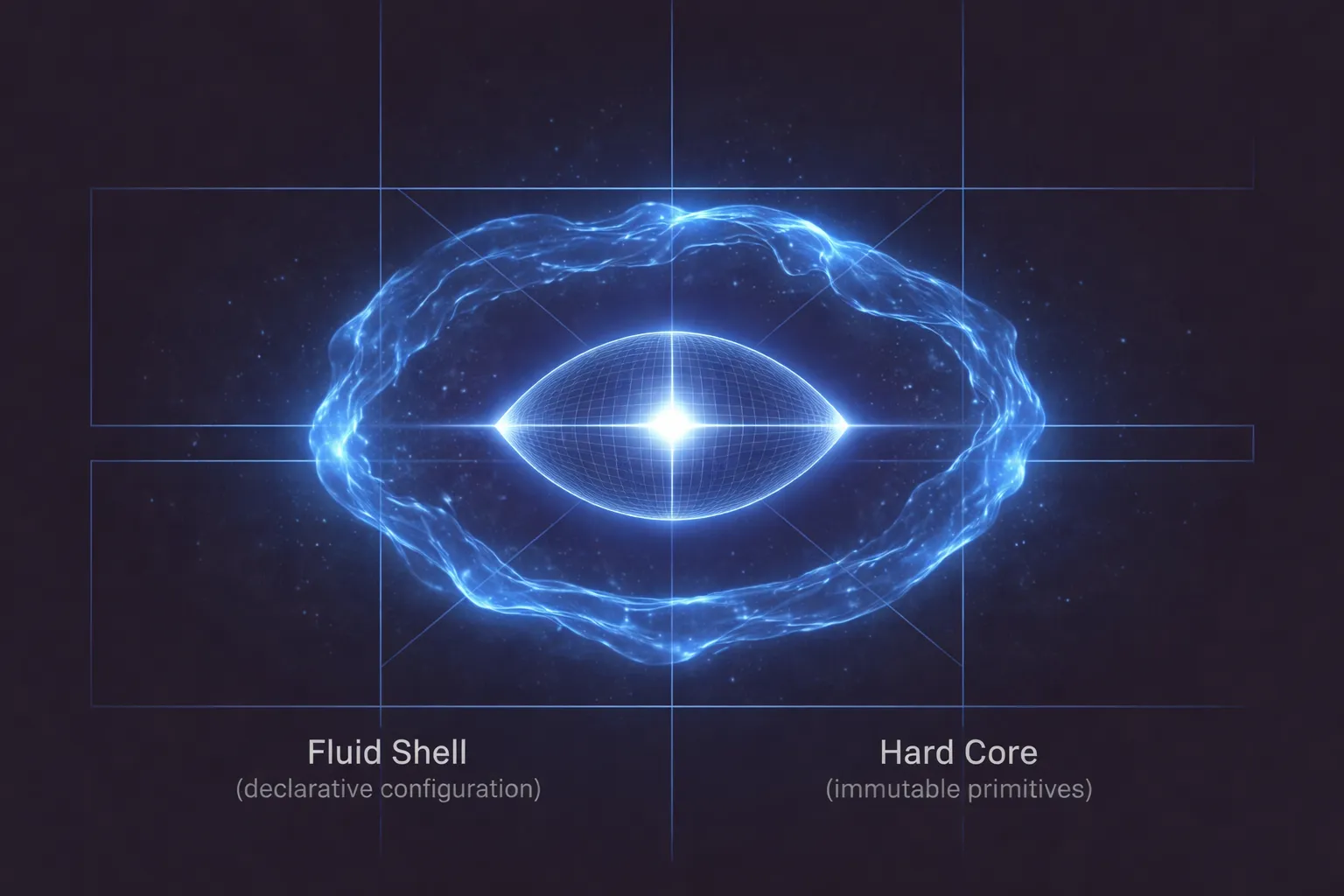

A better model: AI inside enterprise structure

The question isn’t whether to use AI in application development. It’s how to use it within a framework that addresses governance, collaboration, deployment, and workflow requirements.

This is the approach we’ve taken with Appfarm AI. Rather than treating AI as a standalone code generator, it works within Appfarm Create’s visual development environment. When you describe an application in natural language, the AI generates a plan first, not code. You review the plan, refine the scope, and then the AI builds using the same visual components that developers work with directly. Every component the AI creates is visible on the canvas, fully editable, and subject to the same permission, deployment, and governance controls as anything built manually.

That distinction matters because it shifts the accountability model. With standalone AI code generation, the user or developer is responsible for everything that happens after the code appears: security review, architecture, testing, deployment, rollback, compliance evidence, and long-term maintainability. In an enterprise platform model, more of that responsibility sits with the platform vendor. The AI accelerates the building process, while the platform provides the structure around it: multi-environment deployment with one-click promotion and instant rollback, role-based access control with conditional permissions, real-time multiplayer development so teams can collaborate without merge conflicts, and visual transparency so business stakeholders can validate logic without reading code.

Appfarm’s ISO 27001 certification also matters here. For organizations building business-critical workflows, speed alone is not enough. They need to move faster inside a governed environment that supports security, compliance, deployment discipline, and vendor accountability. That’s a different proposition from asking a code generator to produce an application and leaving the organization to assemble the operating model around it.

The goal isn’t to choose between AI and structure. It’s to combine AI assistance with the governance, orchestration, and deployment capabilities that enterprise applications demand.

Looking forward

AI code generation will continue to improve. Context windows will get larger. Models will get better at understanding proprietary codebases and enforcing coding standards. The research community is actively working on these problems.

But even as AI code generation gets better at writing code, the gap between code and a complete enterprise application will persist. Workflow orchestration, multi-user collaboration, compliance governance, and deployment management are not code generation problems. They’re platform problems.

For teams evaluating how to build business-critical applications, the productivity question goes beyond lines of code per hour. It includes how quickly you can move from concept to production, how confidently non-technical stakeholders can validate business logic, how reliably you can deploy and roll back changes, and how completely you can satisfy compliance requirements.

This is especially important for organizations that have not invested in large internal development teams. If you already have a mature engineering organization shipping software every day, AI code generation may fit naturally into that workflow. But many companies still need custom workflows, operational applications, and industry-specific systems without wanting to become software companies themselves. For those teams, a governed platform with AI assistance and vendor accountability is a more practical path than generating code and taking on the full burden of owning it.

AI code generation is a valuable tool for developers. Enterprise applications need a platform.
